Wan 2.7 Open Source: When It Drops and What It Means

I've been refreshing the Wan-Video GitHub page more than I'd like to admit this week. Which, honestly, tracks: I'm Dora, and obsessively testing AI video tools is kind of my whole thing. I went from editing 1–2 videos a day (and burning out on it) to running 5–10 pieces daily, and the only reason that's possible is that I stopped guessing and started actually tracking which tools hold up. I write about what I find. No sponsorship spin, no hype, just what works in a real creator workflow.

So when the cloud version of Wan 2.7 launched, it immediately went on my radar.

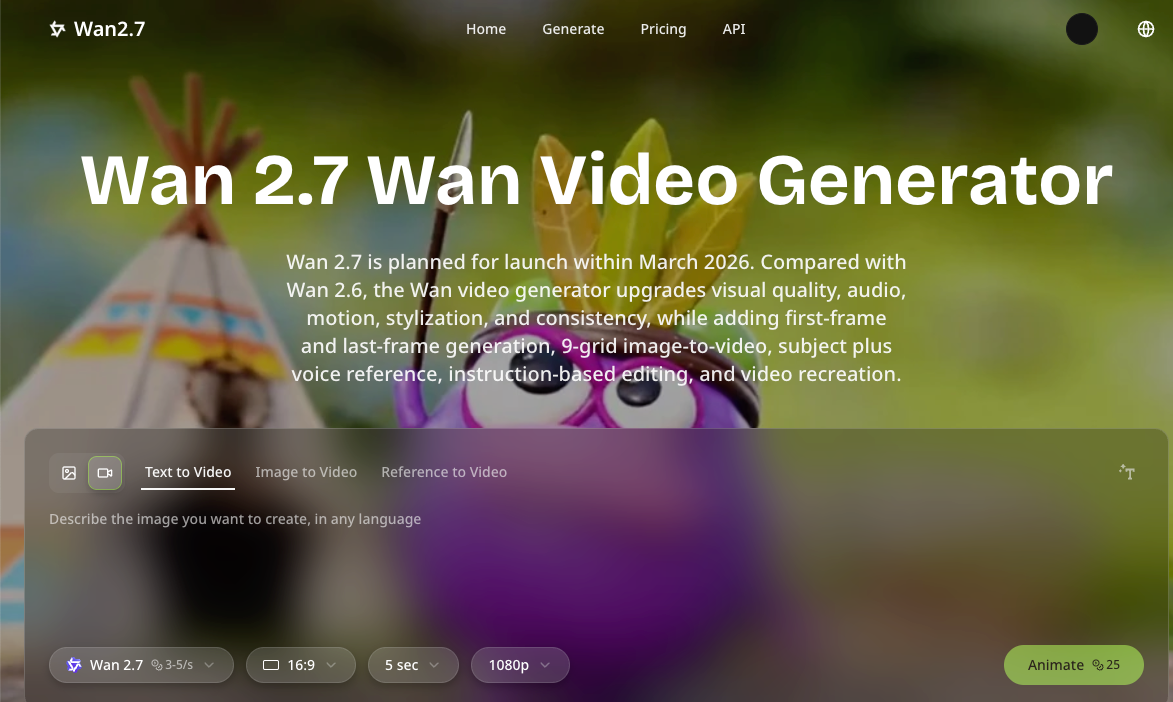

Released publicly in late March 2026, it generates 1080p video up to 15 seconds with text-to-video, image-to-video, and native audio output built in. But here's the question nobody's answering clearly: when do the actual weights drop? And more importantly — does it even matter to your workflow?

That's what this piece is about.

Wan's Open Source Pattern — What History Tells Us

Here's something that confused me when I first started tracking this model family: Alibaba doesn't open-source at launch. They never have.

Wan 2.1 launched in February 2025 and was released under the Apache 2.0 license, but that happened after the cloud version had been live for weeks. Same story with Wan 2.2: it was released under Apache 2.0, allowing anyone to use it, modify it, and build commercial applications with it, with no subscription fees and no API rate limits. But again, cloud first, weights later.

The pattern is consistent: cloud launch first, open weights 4–8 weeks later. For Wan 2.7, that points to mid-to-late Q2 2026.

So if you're waiting to build a local pipeline around Wan 2.7, you're probably looking at May or June 2026. Not tomorrow. Plan accordingly.
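If you want to redo that estimate yourself, here is a minimal sketch of the 4–8 week window. The launch date in it is a placeholder I picked for illustration (the only thing known is "late March 2026"), and the function name is hypothetical, so swap in the real date once you have it.

```python
from datetime import date, timedelta
from typing import Tuple


def weights_window(cloud_launch: date, min_weeks: int = 4, max_weeks: int = 8) -> Tuple[date, date]:
    """Return the earliest and latest expected dates for an open-weights release,
    assuming the historical 'cloud first, weights 4-8 weeks later' pattern holds."""
    earliest = cloud_launch + timedelta(weeks=min_weeks)
    latest = cloud_launch + timedelta(weeks=max_weeks)
    return earliest, latest


# ASSUMPTION: 2026-03-25 is a placeholder for "late March 2026", not a confirmed date.
earliest, latest = weights_window(date(2026, 3, 25))
print(f"Expected window: {earliest.isoformat()} to {latest.isoformat()}")
```

With that placeholder, the strict window lands in late April through May. The May-or-June estimate leaves some room for the release slipping past the historical pattern.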

What the Weights Release Actually Changes

This is where it gets interesting — because "open source" means completely different things depending on who you are.

For local deployment creators, the weights drop is everything. You get full control: custom LoRA training, ComfyUI integration, no per-second billing. With Wan 2.2, anyone could train a LoRA on a few dozen images of a character or style, then load it to ensure consistent appearance across multiple generated videos. Expect the same thing with 2.7 — once weights are available.

For API-first developers, honestly? The weight release is less urgent. An API-first release gives developers tighter commercial control, smoother scaling, and a more direct path into enterprise workflows. If you're already shipping product on top of the cloud version, you might not need the weights at all.

For casual creators using the web interface, the open-source release is almost irrelevant to your day-to-day. You'll keep using wan.video or whatever platform you're already on, and the underlying model swap will be invisible.

The real beneficiaries of the open weights are the builders — the people running ComfyUI locally, training custom characters, building automated batch pipelines.

Cloud Version vs Open Source: What's Different for Creators?

Let me be direct about the trade-offs, because the marketing around "open source" can feel misleading.

Price: Cloud API access costs money per second of generated video. Open weights cost you electricity and hardware depreciation. If you're generating at scale — say, 10+ videos a day — local deployment can pay for itself within weeks.

Speed and access: Cloud is instant. No setup, no VRAM headaches, no driver updates. For solo creators who just need output, cloud wins on friction.

Customizability: This is where open weights genuinely shine. The ComfyUI community develops custom nodes for Wan 2.6 integration, providing visual interfaces for parameter adjustment, batch processing, and workflow automation. Once Wan 2.7 weights are public, expect the same ecosystem to spin up fast — custom LoRAs, community workflows, efficiency optimizations.

Commercial licensing: The Wan 2.2 series models are released under the Apache 2.0 open source license and support commercial use. You can freely use, modify, and distribute these models, including for commercial purposes, as long as you retain the license text and the original copyright notices. If 2.7 follows suit (likely), this matters enormously for anyone building a product on top of it.
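On the price point, whether local hardware pays for itself "within weeks" depends entirely on your own numbers. Here is a rough break-even sketch. Every figure in the example call is a made-up placeholder, not Wan 2.7 pricing, and the function name is hypothetical.

```python
from typing import Optional


def break_even_days(
    hardware_cost: float,
    cloud_price_per_second: float,
    seconds_per_video: float,
    videos_per_day: float,
    local_cost_per_video: float = 0.0,
) -> Optional[float]:
    """Days until local hardware costs less than cloud billing.

    Returns None when running locally is not cheaper per day,
    in which case the hardware never pays for itself.
    """
    cloud_daily = cloud_price_per_second * seconds_per_video * videos_per_day
    local_daily = local_cost_per_video * videos_per_day  # electricity, wear, etc.
    daily_saving = cloud_daily - local_daily
    if daily_saving <= 0:
        return None
    return hardware_cost / daily_saving


# ASSUMPTION: all values below are illustrative placeholders, not real prices.
days = break_even_days(
    hardware_cost=2000.0,         # GPU purchase price
    cloud_price_per_second=0.10,  # cloud cost per second of generated video
    seconds_per_video=15,
    videos_per_day=10,
    local_cost_per_video=0.05,    # rough electricity cost per clip
)
print(f"Break-even after about {days:.0f} days" if days else "Local never breaks even")
```

Plug in the actual per-second rate from your cloud provider and your real daily volume. The answer swings a lot with both.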

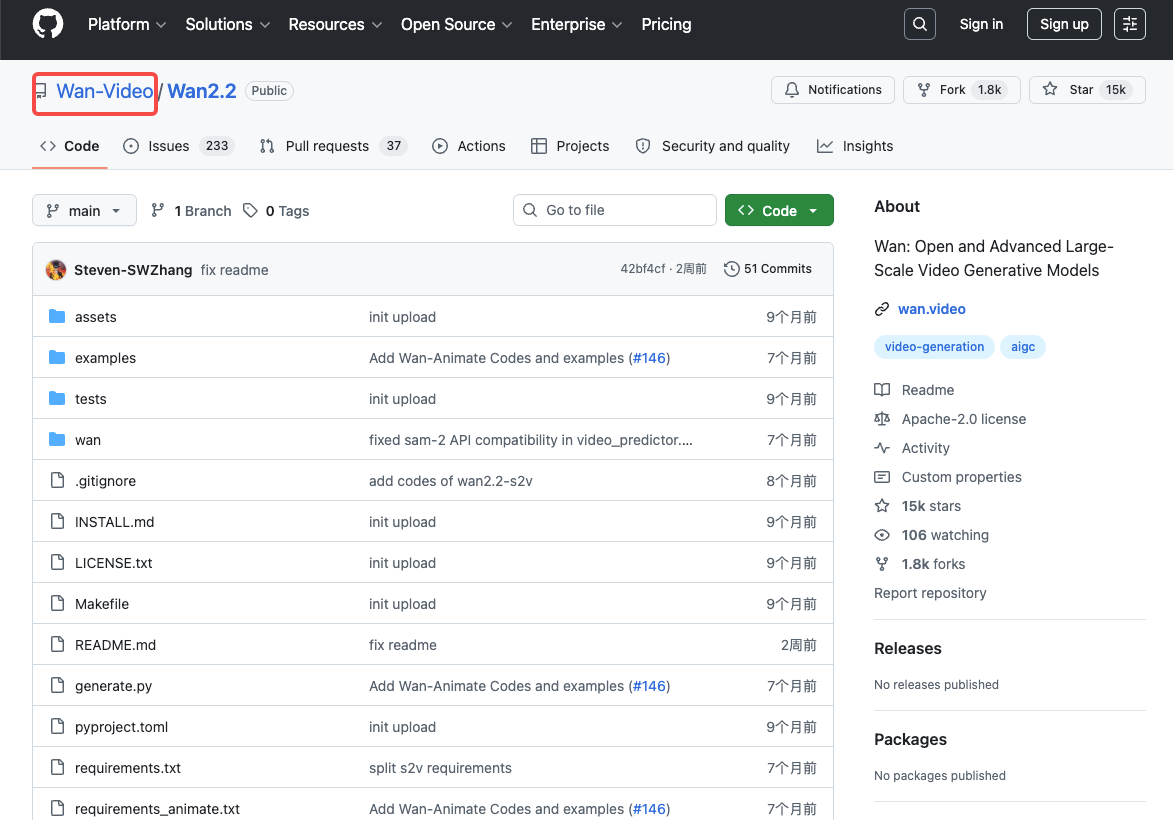

What to Watch for on the Official GitHub

Bookmark the Wan-Video GitHub organization now. That's where weights have dropped for every previous version, and there's no reason to expect 2.7 to be different.

Specifically, watch for:

Model weights format: previous releases came as separate DiT, T5 encoder, and VAE checkpoints. The 14B text-to-video model is approximately 69GB total: DiT weights around 57GB, T5 encoder around 11GB, and VAE around 0.5GB. Know what you're downloading before you start.

ComfyUI-WanVideoWrapper updates: Kijai's wrapper has been the community's go-to for every Wan release. Thanks to its Wan-only focus, it's usually first to get cutting-edge optimizations and hot research features. When 2.7 drops, expect this repo to update within days.

The 9-grid I2V parameter schema: the exact API parameter structure for grid inputs had not been formally published as of this writing. If you're building automated workflows around this feature, confirm the endpoint schema before finalizing your pipeline.
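Before you start a download that size, a quick disk check saves some pain. This is a small sketch using the checkpoint sizes quoted above for the earlier 14B model. The Wan 2.7 sizes are unknown, so treat the numbers, the 20% headroom, and the function names as assumptions.

```python
import shutil

# Sizes quoted for the earlier 14B text-to-video release, in GB.
# ASSUMPTION: Wan 2.7 checkpoints may differ; update these when weights are published.
CHECKPOINT_SIZES_GB = {"dit": 57.0, "t5_encoder": 11.0, "vae": 0.5}


def total_download_gb(sizes: dict = CHECKPOINT_SIZES_GB) -> float:
    """Sum the checkpoint sizes to get the total download in GB."""
    return sum(sizes.values())


def has_room(path: str = ".", sizes: dict = CHECKPOINT_SIZES_GB, headroom: float = 1.2) -> bool:
    """Check that the drive holding `path` has the download size plus some headroom free."""
    free_gb = shutil.disk_usage(path).free / 1e9
    return free_gb >= total_download_gb(sizes) * headroom


print(f"Total download: {total_download_gb():.1f} GB")
print("Enough free space here:", has_room("."))
```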

Hardware Requirements (Expected)

Nobody has officially confirmed Wan 2.7 VRAM requirements yet. But we can make reasonable estimates based on 2.1 and 2.2.

The Wan 2.1 14B model requires 65–80GB of VRAM at 720p — that rules out every consumer GPU including the RTX 5090 (32GB). At full precision, that's datacenter territory.

But here's where FP8 quantization changes everything. FP8 reduces VRAM by roughly 20–40% versus BF16 at a minor quality cost. For the 14B model at 720p, this is the difference between fitting on an H100 PCIe and exceeding it.
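To make that arithmetic concrete, here is a back-of-the-envelope sketch that applies the 20–40% saving to the 65–80GB BF16 range and compares the result against a few cards. It only restates the figures above. It is not a measurement, and the function names are hypothetical.

```python
from typing import Tuple

# VRAM capacities in GB for the cards mentioned in this post.
GPU_VRAM_GB = {"RTX 4090": 24, "RTX 5090": 32, "H100 PCIe": 80}


def fp8_vram_range_gb(
    bf16_low: float = 65.0,
    bf16_high: float = 80.0,
    min_saving: float = 0.20,
    max_saving: float = 0.40,
) -> Tuple[float, float]:
    """Estimated FP8 VRAM range: best case takes the largest saving off the low
    BF16 figure, worst case takes the smallest saving off the high figure."""
    return bf16_low * (1 - max_saving), bf16_high * (1 - min_saving)


def fits(gpu: str, required_gb: float) -> bool:
    """True when the card's VRAM covers the required amount without offloading."""
    return GPU_VRAM_GB[gpu] >= required_gb


best, worst = fp8_vram_range_gb()
print(f"FP8 estimate at 720p: {best:.0f}-{worst:.0f} GB")
for gpu in GPU_VRAM_GB:
    print(f"{gpu}: worst case fits={fits(gpu, worst)}, best case fits={fits(gpu, best)}")
```

By these rough numbers, FP8 alone fits an 80GB card at 720p, while 24GB and 32GB cards still need lower resolution, offloading, or heavier quantization.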

For consumer GPUs, GGUF quantization has become the practical solution. With GGUF quantization and aggressive CPU offloading, Wan 2.2 14B can run on 6GB VRAM cards, such as the 6GB variants of the RTX 3050 and 3060, though generation times are significantly longer.

My rough expectation for Wan 2.7: similar baseline requirements to 2.2, possibly higher due to new features. If you have an RTX 4090 (24GB), you should be able to run it with FP8 quantization at reduced resolution. Full 1080p locally will still require a more serious rig or cloud.

FAQ

Q1: When will Wan 2.7 weights be released?

No official date confirmed yet. Based on the 4–8 week pattern from previous releases, mid-to-late Q2 2026 is the most reasonable estimate. Watch the Wan-Video GitHub for the announcement.

Q2: Is Wan 2.7 expected to be Apache 2.0 like previous versions?

Highly likely but not confirmed. Wan 2.1 and Wan 2.2 were both open-sourced under Apache 2.0 on GitHub, which allowed fast ComfyUI integration and self-hosted runs. Keep an eye on the official repo for the license file.

Q3: What GPU do I need to run Wan 2.7 locally?

Based on benchmarks from earlier Wan releases: an RTX 4090 (24GB) is the practical minimum for consumer hardware with FP8 quantization. For full-quality 720p without optimization tricks, you're looking at 65–80GB of VRAM, meaning an H100 or multi-GPU setup. The community will likely release GGUF versions shortly after weights drop, which brings the bar down significantly.

Q4: Will the 9-grid I2V feature be available in open weights?

Very likely, though not confirmed: previous Wan releases have included all core features in the open weights. The 9-grid layout enables more detailed scene composition and multi-angle reference for higher-quality video output. Whether the ComfyUI wrapper will support it at launch is a different question. Give the community a week or two to build the nodes.

Q5: Where should I track the official release?

Three places: the Wan-Video GitHub organization, the ComfyUI-WanVideoWrapper repo by Kijai (which updates fast when new weights appear), and the official ComfyUI documentation which publishes native workflow guides for each Wan release.
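If you'd rather not refresh the page by hand, here is a minimal polling sketch against GitHub's public REST endpoint for listing an organization's repositories. The assumption that a 2.7 repo will have "2.7" in its name is mine, based on earlier repos being named after their versions. The function names are hypothetical too.

```python
import json
import urllib.request
from typing import List

# GitHub REST API: list public repositories for an organization.
ORG_REPOS_URL = "https://api.github.com/orgs/Wan-Video/repos?per_page=100"


def fetch_org_repos(url: str = ORG_REPOS_URL) -> List[dict]:
    """Download the organization's public repo list as parsed JSON."""
    request = urllib.request.Request(
        url,
        headers={
            "Accept": "application/vnd.github+json",
            "User-Agent": "wan-release-watcher",  # GitHub rejects requests without a user agent
        },
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


def find_candidates(repos: List[dict], needle: str = "2.7") -> List[str]:
    """Return URLs of repos whose name contains `needle`.

    ASSUMPTION: the new repo's name will include the version number.
    """
    return [repo["html_url"] for repo in repos if needle in repo.get("name", "")]


def main() -> None:
    """Fetch the repo list once and report any matches."""
    matches = find_candidates(fetch_org_repos())
    print("\n".join(matches) if matches else "No Wan 2.7 repo yet")
```

Call `main()` from a daily scheduled job rather than a tight loop. Unauthenticated GitHub API requests are rate limited.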

So here's where I land on this: if you're building serious local workflows, the Wan 2.7 open weights release is genuinely worth waiting for. The 9-grid I2V and first-last-frame control are features that local builders will unlock in ways the cloud interface won't allow. But if you need output now, the cloud version is already solid. Don't stall a project waiting for weights that might be 6 weeks out.

Start with what's available. Build the habit of checking GitHub. When weights drop, you'll know within hours — the community moves fast.
